The Basic Picture Underlying Russell’s Account of Intelligence/Rationality

Artificial intelligence (AI) is the field devoted to building artificial animals (or at least artificial creatures that -- in suitable contexts -- appear to be animals) and, for many, artificial persons (or at least artificial creatures that -- in suitable contexts -- appear to be persons). Such goals immediately ensure that AI is a discipline of considerable interest to many philosophers, and this has been confirmed (e.g.) by the energetic attempt, on the part of numerous philosophers, to show that these goals are in fact un/attainable. On the constructive side, many of the core formalisms and techniques used in AI come out of, and are indeed still much used and refined in, philosophy: first-order logic, intensional logics suitable for the modeling of doxastic attitudes and deontic reasoning, inductive logic, probability theory and probabilistic reasoning, practical reasoning and planning, and so on. In light of this, some philosophers conduct AI research and development as philosophy.

In the present entry, the history of AI is briefly recounted, proposed definitions of the field are discussed, and an overview of the field is provided. In addition, both philosophical AI (AI pursued as and out of philosophy) and philosophy of AI are discussed, via examples of both. The entry ends with some speculative commentary regarding the future of AI.

The field of artificial intelligence (AI) officially started in 1956, launched by a small but now-famous DARPA-sponsored summer conference at Dartmouth College, in Hanover, New Hampshire. (The 50-year celebration of this conference, AI@50, was held in July 2006 at Dartmouth, with five of the original participants making it back. What happened at this historic conference figures in the final section of this entry.) Ten thinkers attended, including John McCarthy (who was working at Dartmouth in 1956), Claude Shannon, Marvin Minsky, Arthur Samuel, Trenchard Moore (apparently the lone note-taker at the original conference), Ray Solomonoff, Oliver Selfridge, Allen Newell, and Herbert Simon. From where we stand now, at the start of the new millennium, the Dartmouth conference is memorable for many reasons, including this pair: one, the term ‘artificial intelligence’ was coined there (and has long been firmly entrenched, despite being disliked by some of the attendees, e.g., Moore); two, Newell and Simon revealed a program -- Logic Theorist (LT) -- agreed by the attendees (and, indeed, by nearly all those who learned of and about it soon after the conference) to be a remarkable achievement. LT was capable of proving elementary theorems in the propositional calculus.[1]

Though the term ‘artificial intelligence’ made its advent at the 1956 conference, certainly the field of AI was in operation well before 1956. For example, in a famous Mind paper of 1950, Alan Turing argues that the question “Can a machine think?” (and here Turing is talking about standard computing machines: machines capable of computing only functions from the natural numbers (or pairs, triples, ... thereof) to the natural numbers that a Turing machine or equivalent can handle) should be replaced with the question “Can a machine be linguistically indistinguishable from a human?” Specifically, he proposes a test, the “Turing Test” (TT) as it's now known. In the TT, a woman and a computer are sequestered in sealed rooms, and a human judge, in the dark as to which of the two rooms contains which contestant, asks questions by email (actually, by teletype, to use the original term) of the two. If, on the strength of returned answers, the judge can do no better than 50/50 when delivering a verdict as to which room houses which player, we say that the computer in question has passed the TT. Passing in this sense operationalizes linguistic indistinguishability. Later, we shall discuss the role that TT has played, and indeed continues to play, in attempts to define AI. At the moment, though, the point is that in his paper, Turing explicitly lays down the call for building machines that would provide an existence proof of an affirmative answer to his question. The call even includes a suggestion for how such construction should proceed. (He suggests that “child machines” be built, and that these machines could then gradually grow up on their own to learn to communicate in natural language at the level of adult humans. This suggestion has arguably been followed by Rodney Brooks and the philosopher Daniel Dennett in the Cog Project: (Dennett 1994). In addition, the Spielberg/Kubrick movie A.I. is at least in part a cinematic exploration of Turing's suggestion.)
The TT continues to be at the heart of AI and discussions of its foundations, as confirmed by the appearance of (Moor 2003). In fact, the TT continues to be used to define the field, as in Nilsson's (1998) position, expressed in his textbook for the field, that AI simply is the field devoted to building an artifact able to negotiate this test.

Returning to the issue of the historical record, even if one bolsters the claim that AI started at the 1956 conference by adding the proviso that ‘artificial intelligence’ refers to a nuts-and-bolts engineering pursuit (in which case Turing's philosophical discussion, despite calls for a child machine, wouldn’t exactly count as AI per se), one must confront the fact that Turing, and indeed many predecessors, did attempt to build intelligent artifacts. In Turing's case, such building was surprisingly well-understood before the advent of programmable computers: Turing wrote a program for playing chess before there were computers to run such programs on, by slavishly following the code himself. He did this well before 1950, and long before Newell (1973) gave thought in print to the possibility of a sustained, serious attempt at building a good chess-playing computer.[2]

From the standpoint of philosophy, neither the 1956 conference, nor Turing's Mind paper, come close to marking the start of AI. This is easy enough to see. For example, Descartes proposed TT (not the TT by name, of course) long before Turing was born.[3] Here's the relevant passage:

If there were machines which bore a resemblance to our body and imitated our actions as far as it was morally possible to do so, we should always have two very certain tests by which to recognise that, for all that, they were not real men. The first is, that they could never use speech or other signs as we do when placing our thoughts on record for the benefit of others. For we can easily understand a machine's being constituted so that it can utter words, and even emit some responses to action on it of a corporeal kind, which brings about a change in its organs; for instance, if it is touched in a particular part it may ask what we wish to say to it; if in another part it may exclaim that it is being hurt, and so on. But it never happens that it arranges its speech in various ways, in order to reply appropriately to everything that may be said in its presence, as even the lowest type of man can do. And the second difference is, that although machines can perform certain things as well as or perhaps better than any of us can do, they infallibly fall short in others, by which means we may discover that they did not act from knowledge, but only for the disposition of their organs. For while reason is a universal instrument which can serve for all contingencies, these organs have need of some special adaptation for every particular action. From this it follows that it is morally impossible that there should be sufficient diversity in any machine to allow it to act in all the events of life in the same way as our reason causes us to act. (Descartes 1911, p. 116)

At the moment, Descartes is certainly carrying the day.[4] Turing predicted that his test would be passed by 2000, but the fireworks-across-the-globe start of the new millennium has long since died down, and the most articulate of computers still can't meaningfully debate a sharp toddler. Moreover, while in certain focussed areas machines out-perform minds (IBM's famous Deep Blue prevailed in chess over Garry Kasparov, e.g.), minds have a (Cartesian) capacity for cultivating their expertise in virtually any sphere. (If it were announced to Deep Blue, or any current successor, that chess was no longer to be the game of choice, but rather a heretofore unplayed variant of chess, the machine would be trounced by human children of average intelligence having no chess expertise.) AI simply hasn't managed to create general intelligence; it hasn't even managed to produce an artifact indicating that eventually it will create such a thing.

But what if we consider the history of AI not from the standpoint of philosophy, but rather from the standpoint of the field with which, today, it is most closely connected? The reference here is to computer science. From this standpoint, does AI run back to well before Turing? Interestingly enough, the results are the same: we find that AI runs deep into the past, and has always had philosophy in its veins. This is true for the simple reason that computer science grew out of logic and probability theory, which in turn grew out of (and are still intertwined with) philosophy. Computer science, today, is shot through and through with logic; the two fields cannot be separated. This phenomenon has become an object of study unto itself (Halpern et al. 2001). The situation is no different when we are talking not about traditional logic, but rather about probabilistic formalisms, also a significant component of modern-day AI: These formalisms also grew out of philosophy, as nicely chronicled, in part, by Glymour (1992). For example, in the one mind of Pascal was born a method of rigorously calculating probabilities, conditional probability (which plays a large role in AI to this day), and such fertile philosophico-probabilistic arguments as Pascal's wager, according to which it is irrational not to become a Christian.

That modern-day AI has its roots in philosophy, and in fact that these historical roots are temporally deeper than even Descartes’ distant day, can be seen by looking to the clever, revealing cover of the comprehensive textbook Artificial Intelligence: A Modern Approach (known in the AI community as simply AIMA for (Russell & Norvig 2002)).

What you see there is an eclectic collection of memorabilia that might be on and around the desk of some imaginary AI researcher. For example, if you look carefully, you will specifically see: a picture of Turing, a view of Big Ben through a window (perhaps R&N are aware of the fact that Turing famously held at one point that a physical machine with the power of a universal Turing machine is physically impossible: he quipped that it would have to be the size of Big Ben), a planning algorithm described in Aristotle's De Motu Animalium, Frege's fascinating notation for first-order logic, a glimpse of Lewis Carroll’s (1958) pictorial representation of syllogistic reasoning, Ramon Lull’s concept-generating wheel from his 13th-century Ars Magna, and a number of other pregnant items (including, in a clever, recursive, and bordering-on-self-congratulatory touch, a copy of AIMA itself). Though there is insufficient space here to make all the historical connections, we can safely infer from the appearance of these items that AI is indeed very, very old. Even those who insist that AI is at least in part an artifact-building enterprise must concede that, in light of these objects, AI is ancient, for it isn’t just theorizing from the perspective that intelligence is at bottom computational that runs back into the remote past of human history: Lull’s wheel, for example, marks an attempt to capture intelligence not only in computation, but in a physical artifact that embodies that computation.

One final point about the history of AI seems worth making.

It is generally assumed that the birth of modern-day AI in the 1950’s came in large part because of and through the advent of the modern high-speed digital computer. This assumption accords with common sense. After all, AI (and, for that matter, to some degree its cousin, cognitive science, particularly computational cognitive modeling, the sub-field of cognitive science devoted to producing computational simulations of human cognition) is aimed at implementing intelligence in a computer, and it stands to reason that such a goal would be inseparably linked with the advent of such devices. However, this is only part of the story: the part that reaches back but to Turing and others (e.g., von Neumann) responsible for the first electronic computers. The other part is that, as already mentioned, AI has a particularly strong tie, historically speaking, to reasoning (logic-based and, in the need to deal with uncertainty, probabilistic reasoning). In this story, nicely told by Glymour (1992), a search for an answer to the question “What is a proof?” eventually led to an answer based on Frege’s version of first-order logic (FOL): a mathematical proof consists in a series of step-by-step inferences from one formula of first-order logic to the next. The obvious extension of this answer (and it isn’t a complete answer, given that lots of classical mathematics, despite conventional wisdom, clearly can’t be expressed in FOL; even the Peano Axioms require SOL) is to say that not only mathematical thinking, but thinking, period, can be expressed in FOL. (This extension was entertained by many logicians long before the start of information-processing psychology and cognitive science -- a fact some cognitive psychologists and cognitive scientists often seem to forget.)
Today, logic-based AI is only part of AI, but the point is that this part still lives (with help from logics much more powerful, but much more complicated, than FOL), and it can be traced all the way back to Aristotle's theory of the syllogism. In the case of uncertain reasoning, the question isn’t “What is a proof?”, but rather questions such as “What is it rational to believe, in light of certain observations and probabilities?” This is a question posed and tackled before the arrival of digital computers.

So far we have been proceeding as if we have a firm grasp of AI. But what exactly is AI? Philosophers arguably know better than anyone that defining disciplines can be well nigh impossible. What is physics? What is biology? What, for that matter, is philosophy? These are remarkably difficult, maybe even eternally unanswerable, questions. Perhaps the most we can manage here under obvious space constraints is to present in encapsulated form some proposed definitions of AI. We do include a glimpse of recent attempts to define AI in detailed, rigorous fashion.

Russell and Norvig (1995, 2002), in their aforementioned AIMA text, provide a set of possible answers to the “What is AI?” question that has considerable currency in the field itself. These answers all assume that AI should be defined in terms of its goals: a candidate definition thus has the form “AI is the field that aims at building ...” The answers all fall under a quartet of types placed along two dimensions. One dimension is whether the goal is to match human performance, or, instead, ideal rationality. The other dimension is whether the goal is to build systems that reason/think, or rather systems that act. The situation is summed up in this table:

| | Human-Based | Ideal Rationality |
|---|---|---|
| Reasoning-Based: | Systems that think like humans. | Systems that think rationally. |
| Behavior-Based: | Systems that act like humans. | Systems that act rationally. |

Please note that this quartet of possibilities does reflect (at least a significant portion of) the relevant literature. For example, philosopher John Haugeland (1985) falls into the Human/Reasoning quadrant when he says that AI is “The exciting new effort to make computers think ... machines with minds, in the full and literal sense.” Luger and Stubblefield (1993) seem to fall into the Ideal/Act quadrant when they write: “The branch of computer science that is concerned with the automation of intelligent behavior.” The Human/Act position is occupied most prominently by Turing, whose test is passed only by those systems able to act sufficiently like a human. The “thinking rationally” position is defended (e.g.) by Winston (1992).

It’s important to know that the contrast between the focus on systems that think/reason versus systems that act, while found, as we have seen, at the heart of AIMA, and at the heart of AI itself, should not be interpreted as implying that AI researchers view their work as falling all and only within one of these two compartments. Researchers who focus more or less exclusively on knowledge representation and reasoning are also quite prepared to acknowledge that they are working on (what they take to be) a central component or capability within any one of a family of larger systems spanning the reason/act distinction. The clearest case may come from the work on planning -- an AI area traditionally making central use of representation and reasoning. For good or ill, much of this research is done in abstraction (in vitro, as opposed to in vivo), but the researchers involved certainly intend or at least hope that the results of their work can be embedded into systems that actually do things, such as, for example, execute the plans.

What about Russell and Norvig themselves? What is their answer to the

What is AI? question? They are firmly in the the “acting

rationally” camp. In fact, it’s safe to say both that they are

the chief proponents of this answer, and that they have been

remarkably successful evangelists. Their extremely

influential AIMA can be viewed as a book-length defense and

specification of the Ideal/Act category. We will look a bit later at

how Russell and Norvig lay out all of AI in terms of intelligent

agents, which are systems that act in accordance with various

ideal standards for rationality. But first let’s look a bit closer at

the view of intelligence underlying the AIMA text. We can do

so by turning to (Russell 1997). Here Russell recasts the “What

is AI?” question as the question “What is

intelligence?” (presumably under the assumption that we have a

good grasp of what an artifact is), and then he identifies

intelligence with rationality. More specifically, Russell sees

AI as the field devoted to building intelligent agents, which

are functions taking as input tuples of percepts from the external

environment, and producing behavior (actions) on the basis of these

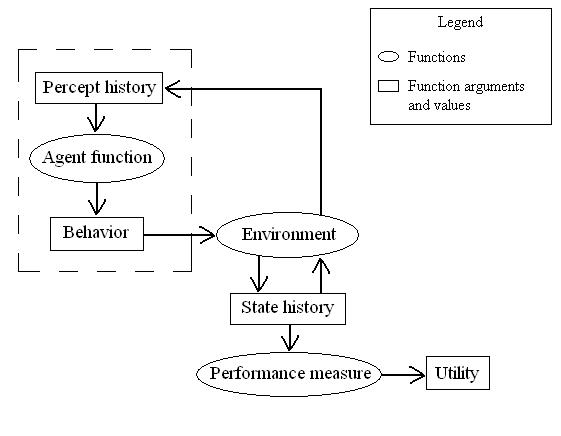

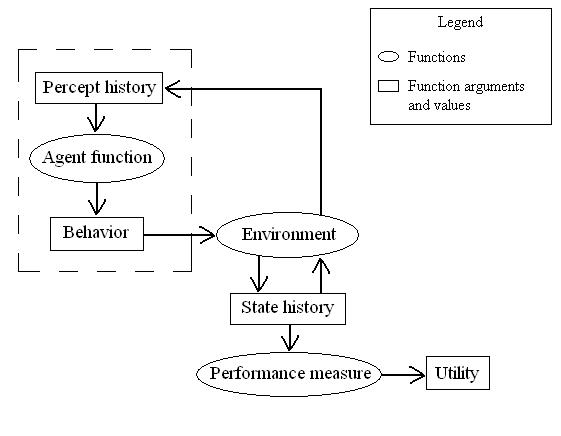

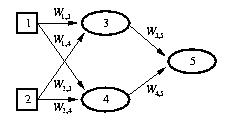

percepts. Russell’s overall picture is this one:

Of course, as Russell points out, it’s usually not possible to actually build perfectly rational agents. For example, though it’s easy enough to specify an algorithm for playing invincible chess, it’s not feasible to implement this algorithm. What traditionally happens in AI is that programs that are -- to use Russell’s apt terminology -- calculatively rational are constructed instead: these are programs that, if executed infinitely fast, would result in perfectly rational behavior. In the case of chess, this would mean that we strive to write a program that runs an algorithm capable, in principle, of finding a flawless move, but we add features that truncate the search for this move in order to play within intervals of digestible duration.
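The idea of truncating an in-principle flawless search can be illustrated with a minimal sketch. Chess is far too large for a short example, so the code below assumes a hypothetical toy game instead (players alternately remove 1 to 3 stones, and whoever takes the last stone wins). The function name `search`, the `depth` parameter, and the neutral estimate of 0 at the cutoff are all illustrative assumptions, not anything taken from Russell. With `depth=None` the search is exhaustive and so returns a flawless move; with a finite `depth` it answers within a fixed budget but may no longer know the true outcome.

```python
MOVES = (1, 2, 3)  # hypothetical toy game: remove 1-3 stones; taking the last stone wins


def legal_moves(stones):
    """Moves available when `stones` remain."""
    return [m for m in MOVES if m <= stones]


def search(stones, depth):
    """Return (value, move) for the player about to move.

    value is +1 for a forced win, -1 for a forced loss, and 0 when the
    depth budget ran out before the outcome was settled.
    depth=None means no truncation (the idealized, exhaustive search).
    """
    if stones == 0:
        return -1, None  # the opponent just took the last stone: we lost
    if depth == 0:
        return 0, None   # budget exhausted: fall back to a neutral estimate
    best_value, best_move = -2, None
    for move in legal_moves(stones):
        next_depth = None if depth is None else depth - 1
        child_value, _ = search(stones - move, next_depth)
        value = -child_value  # the opponent's gain is our loss
        if value > best_value:
            best_value, best_move = value, move
    return best_value, best_move
```

Calling `search(5, None)` explores the whole game tree, whereas `search(5, 1)` looks only one move ahead and returns the neutral value 0. A real chess program would replace the neutral estimate with a heuristic evaluation of the position.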

Russell himself champions a new brand of intelligence/rationality for AI; he calls this brand bounded optimality. To understand Russell’s view, first we follow him in introducing a distinction: we say that agents have two components: a program, and a machine upon which the program runs. We write $\mathrm{Agent}(P, M)$ to denote the agent function implemented by program $P$ running on machine $M$. Now, let $\mathcal{L}(M)$ denote the set of all programs $P$ that can run on machine $M$. The bounded optimal program $P_{opt}$ then is:

$$P_{opt} = \arg\max_{P \in \mathcal{L}(M)} V(\mathrm{Agent}(P, M), E, U)$$

You can understand this equation in terms of any of the mathematical idealizations for standard computation. For example, machines can be identified with Turing machines minus instructions (i.e., TMs are here viewed architecturally only: as having tapes divided into squares upon which symbols can be written, read/write heads capable of moving up and down the tape to write and erase, and control units which are in one of a finite number of states at any time), and programs can be identified with instructions in the Turing machine model (telling the machine to write and erase symbols, depending upon what state the machine is in). So, if you are told that you must “program” within the constraints of a 22-state Turing machine, you could search for the “best” program given those constraints. In other words, you could strive to find the optimal program within the bounds of the 22-state architecture. Russell’s (1997) view is thus that AI is the field devoted to creating optimal programs for intelligent agents, under time and space constraints on the machines implementing these programs.[5]
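To make the search for a best program within fixed bounds concrete, here is a minimal sketch under strong simplifying assumptions. The machine is a hypothetical one that can store only a single action per percept, so the set of programs that can run on it is tiny and can be enumerated outright. The text does not spell out V, E, and U, so the sketch assumes that E is a class of environments (here, weighted percept sequences), U is a utility function, and V is the expected utility of an agent function over E. Every name in the code is illustrative.

```python
from itertools import product

PERCEPTS = (0, 1)
ACTIONS = ("stay", "move")


def programs_for_machine():
    """Enumerate every program that fits the hypothetical machine M,
    which stores exactly one action per percept (a lookup table)."""
    for actions in product(ACTIONS, repeat=len(PERCEPTS)):
        yield dict(zip(PERCEPTS, actions))


def agent(program):
    """Agent(P, M): the agent function implemented by running P on M."""
    return lambda percept: program[percept]


def value(agent_fn, environments, utility):
    """V(f, E, U), assumed here to be expected utility: E is a list of
    (probability, percept sequence) pairs and U scores each action."""
    return sum(
        prob * sum(utility(p, agent_fn(p)) for p in percepts)
        for prob, percepts in environments
    )


def bounded_optimal(environments, utility):
    """Pick the program maximizing V(Agent(P, M), E, U) over all programs
    that can run on M."""
    return max(
        programs_for_machine(),
        key=lambda prog: value(agent(prog), environments, utility),
    )


# Hypothetical environment class E and utility U, chosen only for illustration.
def utility(percept, action):
    """Reward moving on percept 1 and staying on percept 0."""
    return 1.0 if (percept == 1) == (action == "move") else 0.0


ENVIRONMENTS = [(0.5, [0, 1, 1]), (0.5, [1, 1, 0])]

best_program = bounded_optimal(ENVIRONMENTS, utility)
```

Here `best_program` is the table mapping percept 0 to "stay" and percept 1 to "move". For a realistic machine, such as the 22-state Turing machine mentioned above, the program space is far too large to enumerate, which is part of what makes bounded optimality a research program rather than a recipe.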

It should be mentioned that there is a different, much more straightforward answer to the “What is AI?” question. This answer, which goes back to the days of the original Dartmouth conference, was expressed by, among others, Newell (1973), one of the grandfathers of modern-day AI (recall that he attended the 1956 conference); it is:

AI is the field devoted to building artifacts that are intelligent, where ‘intelligent’ is operationalized through intelligence tests (such as the Wechsler Adult Intelligence Scale), and other tests of mental ability (including, e.g., tests of mechanical ability, creativity, and so on).

Though few are aware of this now, this answer was taken quite seriously for a while, and in fact underlay one of the most famous programs in the history of AI: the ANALOGY program of Evans (1968), which solved geometric analogy problems of a type seen in many intelligence tests. An attempt to rigorously define this forgotten form of AI (as what they dub Psychometric AI), and to resurrect it from the days of Newell and Evans, is provided by Bringsjord and Schimanski (2003). Recently, a sizable private investment has been made in the ongoing attempt, known as Project Halo, to build a “digital Aristotle”, in the form of a machine able to excel on standardized tests such as the AP exams tackled by US high school students (Friedland et al. 2004). In addition, researchers at Northwestern have forged a connection between AI and tests of mechanical ability (Klenk et al. 2005).

In the end, as is the case with any discipline, to really know precisely what that discipline is requires you to, at least to some degree, dive in and do, or at least dive in and read. Two decades ago such a dive was quite manageable. Today, because the content that has come to constitute AI has mushroomed, the dive (or at least the swim after it) is a bit more demanding. Before looking in more detail at the content that composes AI, we take a quick look at the explosive growth of AI.

First, a point of clarification. The growth of which we speak is not a shallow sort correlated with amount of funding provided for a given sub-field of AI. That kind of thing happens all the time in all fields, and can be triggered by entirely political and financial changes designed to grow certain areas, and diminish others. Rather, we are speaking of an explosion of deep content: new material which someone intending to be conversant with the field needs to know. Relative to other fields, the size of the explosion may or may not be unprecedented. (Though it should perhaps be noted that an analogous increase in philosophy would be marked by the development of entirely new formalisms for reasoning, reflected in the fact that, say, longstanding philosophy textbooks like Copi’s (2004) Introduction to Logic are dramatically rewritten and enlarged to include these formalisms, rather than remaining anchored to essentially immutable core formalisms, with incremental refinement around the edges through the years.) But it certainly appears to be quite remarkable, and is worth taking note of here, if for no other reason than that AI’s near-future will revolve in significant part around whether or not the new content in question forms a foundation for new long-lived research and development that would not otherwise obtain.

Were you to have begun formal coursework in AI in 1985, your textbook would likely have been Eugene Charniak's comprehensive-at-the-time Introduction to Artificial Intelligence (Charniak & McDermott 1985). This book gives a strikingly unified presentation of AI -- as of the early 1980’s. This unification is achieved via first-order logic (FOL), which runs throughout the book and binds things together. For example: In the chapter on computer vision (3), everyday objects like bowling balls are represented in FOL. In the chapter on parsing language (4), the meanings of words, phrases, and sentences are identified with corresponding formulae in FOL (e.g., they reduce “the red block” to FOL on page 229). In Chapter 6, “Logic and Deduction”, everything revolves around FOL and proofs therein (with an advanced section on nonmonotonic reasoning couched in FOL as well). And Chapter 8 is devoted to abduction and uncertainty, where once again FOL, not probability theory, is the foundation. It’s clear that FOL renders (Charniak & McDermott 1985) esemplastic. Today, due to the explosion of content in AI, this kind of unification is no longer possible.

Though there is no need to get carried away in trying to quantify the explosion of AI content, it isn't hard to begin to do so for the inevitable skeptics. (Charniak & McDermott 1985) has 710 pages. The first edition of AIMA, published ten years later in 1995, has 932 pages, each with about 20% more words per page than C&M's book. The second edition of AIMA weighs in at a backpack-straining 1023 pages, with new chapters on probabilistic language processing, and uncertain temporal reasoning.

The explosion of AI content can also be seen topically. C&M cover nine highest-level topics, each in some way tied firmly to FOL implemented in (a dialect of) the programming language Lisp, and each (with the exception of Deduction, whose additional space testifies further to the centrality of FOL) covered in one chapter:

In AIMA the expansion is obvious. For example, Search is given three full chapters, and Learning is given four chapters. AIMA also includes coverage of topics not present in C&M's book; one example is robotics, which is given its own chapter in AIMA. In the second edition, as mentioned, there are two new chapters: one on constraint satisfaction that constitutes a lead-in to logic, and one on uncertain temporal reasoning that covers hidden Markov models, Kalman filters, and dynamic Bayesian networks. A lot of other additional material appears in new sections introduced into chapters seen in the first edition. For example, the second edition includes coverage of propositional logic as a bona fide framework for building significant intelligent agents. In the first edition, such logic is introduced mainly to facilitate the reader's understanding of full FOL.

One of the remarkable aspects of (Charniak & McDermott 1985) is this: The authors say the central dogma of AI is that “What the brain does may be thought of at some level as a kind of computation” (p. 6). And yet nowhere in the book is brain-like computation discussed. In fact, you will search the index in vain for the term ‘neural’ and its variants. Please note that the authors are not to blame for this. A large part of AI’s growth has come from formalisms, tools, and techniques that are, in some sense, brain-based, not logic-based. A recent paper that conveys the importance and maturity of neurocomputation is (Litt et al. 2006). (Growth has also come from a return of probabilistic techniques that had withered by the mid-70’s and 80’s. More about that momentarily, in the next “resurgence” section.)

One very prominent class of non-logicist formalism does make an explicit nod in the direction of the brain: viz., artificial neural networks (or as they are often simply called, neural networks, or even just neural nets). (The structure of neural networks is discussed below.) Because Minsky and Papert's (1969) Perceptrons led many (including, specifically, many sponsors of AI research and development) to conclude that neural networks didn't have sufficient information-processing power to model human cognition, the formalism was pretty much universally dropped from AI. However, Minsky and Papert had only considered very limited neural networks. Connectionism, the view that intelligence consists not in symbolic processing, but rather non-symbolic processing at least somewhat like what we find in the brain (at least at the cellular level), approximated specifically by artificial neural networks, came roaring back in the early 1980’s on the strength of more sophisticated forms of such networks, and soon the situation was (to use a metaphor introduced by John McCarthy) that of two horses in a race toward building truly intelligent agents.

If one had to pick a year at which connectionism was resurrected, it would certainly be 1986, the year Parallel Distributed Processing (Rumelhart & McClelland 1986) appeared in print. The rebirth of connectionism was specifically fueled by the back-propagation algorithm over neural networks, nicely covered in Chapter 20 of AIMA. The symbolicist/connectionist race led to a spate of lively debate in the literature (e.g., Smolensky 1988, Bringsjord 1991), and some AI engineers have explicitly championed a methodology marked by a rejection of knowledge representation and reasoning. For example, Rodney Brooks was such an engineer; he wrote the well-known “Intelligence Without Representation” (1991), and his Cog Project, to which we referred above, is arguably an incarnation of the premeditatedly non-logicist approach. Increasingly, however, those in the business of building sophisticated systems find that both logicist and more neurocomputational techniques are required (Wermter & Sun 2001).[6] In addition, the neurocomputational paradigm today includes connectionism only as a proper part, in light of the fact that some of those working on building intelligent systems strive to do so by engineering brain-based computation outside the neural network-based approach (e.g., Granger 2004a, 2004b).

There is a second dimension to the explosive growth of AI: the explosion in popularity of probabilistic methods that aren’t neurocomputational in nature, in order to formalize and mechanize a form of non-logicist reasoning in the face of uncertainty. Interestingly enough, it is Eugene Charniak himself who can be safely considered one of the leading proponents of an explicit, premeditated turn away from logic to statistical techniques. His area of specialization is natural language processing, and whereas his introductory textbook of 1985 gave an accurate sense of his approach to parsing at the time (as we have seen, write computer programs that, given English text as input, ultimately infer meaning expressed in FOL), this approach was abandoned in favor of purely statistical approaches (Charniak 1993). At the recent AI@50 conference, Charniak boldly proclaimed, in a talk tellingly entitled “Why Natural Language Processing is Now Statistical Natural Language Processing,” that logicist AI is moribund, and that the statistical approach is the only promising game in town -- for the next 50 years.[7] The chief source of energy and debate at the conference flowed from the clash between Charniak's probabilistic orientation, and the original logicist orientation, upheld at the conference in question by John McCarthy and others.

AI's use of probability theory grows out of the standard form of this theory, which grew directly out of technical philosophy and logic. This form will be familiar to many philosophers, but let's review it quickly now, in order to set a firm stage for making points about the new probabilistic techniques that have energized AI.

Just as in the case of FOL, in probability theory we are concerned with declarative statements, or propositions, to which degrees of belief are applied; we can thus say that both logicist and probabilistic approaches are symbolic in nature. More specifically, the fundamental proposition in probability theory is a random variable, which can be conceived of as an aspect of the world whose status is initially unknown. We usually capitalize the names of random variables, though we reserve p, q, r, ... as such names as well. In a particular murder investigation centered on whether or not Mr. Black committed the crime, the random variable Guilty might be of concern. The detective may be interested as well in whether or not the murder weapon -- a particular knife, let us assume -- belongs to Black. In light of this, we might say that Weapon = true if it does, and Weapon = false if it doesn't. As a notational convenience, we can write weapon and ¬weapon for these two cases, respectively; and we can use this convention for other variables of this type.

The kind of variables we have described so far are Boolean, because their domain is simply {true, false}. But we can generalize and allow discrete random variables, whose values are from any countable domain. For example, PriceTChina might be a variable for the price of (a particular, presumably) tea in China, and its domain might be {1, 2, 3, 4, 5}, where each number here is in US dollars. A third type of variable is continuous; its domain is either the reals, or some subset thereof.

We say that an atomic event is an assignment of particular values from the appropriate domains to all the variables composing the (idealized) world. For example, in the simple murder investigation world introduced just above, we have two Boolean variables, Guilty and Weapon, and there are just four atomic events. Note that atomic events have some obvious properties. For example, they are mutually exclusive, exhaustive, and logically entail the truth or falsity of every proposition. Usually not obvious to beginning students is a fourth property, namely, any proposition is logically equivalent to the disjunction of all atomic events that entail that proposition.
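The notion of an atomic event can be made concrete with a short sketch. The Python below is an illustration only: the dictionary layout and the helper name `atomic_events` are assumptions introduced here, not part of the entry. It enumerates every complete assignment of values to the variables of the murder world, and then shows how the count grows when the discrete variable PriceTChina is added.

```python
from itertools import product

# Domains of the random variables in the idealized murder world.
domains = {
    "Guilty": [True, False],
    "Weapon": [True, False],
}

def atomic_events(domains):
    """Return every complete assignment of values to the variables."""
    names = list(domains)
    value_lists = [domains[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]

events = atomic_events(domains)
for event in events:
    print(event)
print(len(events))

# Adding the five-valued variable PriceTChina multiplies the count by five.
with_price = atomic_events({**domains, "PriceTChina": [1, 2, 3, 4, 5]})
print(len(with_price))
```

Because each event fixes a value for every variable, no two events can hold together and exactly one must hold, which is the mutual exclusivity and exhaustiveness noted above.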

Prior probabilities correspond to a degree of belief accorded a proposition in the complete absence of any other information. For example, if the prior probability of Black's guilt is .2, we write

$$P(\mathit{Guilty} = \mathit{true}) = .2$$

or simply P(guilty) = .2. It is often convenient to have a notation allowing one to refer economically to the probabilities of all the possible values for a random variable. For example, we can write

$$\mathbf{P}(\mathit{PriceTChina})$$

as an abbreviation for the five equations listing all the possible prices for tea in China. We can also write

In addition, as further convenient notation, we can write P(Guilty, Weapon) to denote the probabilities of all combinations of values of the relevant set of random variables. This is referred to as the joint probability distribution of Guilty and Weapon. The full joint probability distribution covers the distribution for all the random variables used to describe a world. Given our simple murder world, extended with the price of tea, we have 20 atomic events summed up in the expression

$$\mathbf{P}(\mathit{Guilty}, \mathit{Weapon}, \mathit{PriceTChina})$$

The final piece of the basic language of probability theory corresponds to conditional probabilities. Where p and q are any propositions, the relevant expression is P(p|q), which can be interpreted as “the probability of p, given that all we know is q.” For example,

$$P(\mathit{guilty} \mid \mathit{weapon}) = .7$$

says that if the murder weapon belongs to Black, and no other information is available, the probability that Black is guilty is .7.

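Andrei Kolmogorov showed how to construct probability theory from three axioms that make use of the machinery now introduced, viz., (1) all probabilities fall between 0 and 1, i.e., 0 ≤ P(p) ≤ 1 for every proposition p; (2) valid propositions have probability 1 and unsatisfiable propositions have probability 0, i.e., P(true) = 1 and P(false) = 0; and (3) P(p ∨ q) = P(p) + P(q) − P(p ∧ q).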

Probabilistic inference consists in computing, from observed evidence expressed in terms of probability theory, posterior probabilities of propositions of interest. For a good long while, there have been algorithms for carrying out such computation. These algorithms precede the resurgence of probabilistic techniques in the 1990s. (Chapter 13 of AIMA presents a number of them.) For example, given the Kolmogorov axioms, here is a straightforward way of computing the probability of any proposition, using the full joint distribution giving the probabilities of all atomic events: Where p is some proposition, let α(p) be the disjunction of all atomic events in which p holds. Since the probability of a proposition (i.e., P(p)) is equal to the sum of the probabilities of the atomic events in which it holds, we have an equation that provides a method for computing the probability of any proposition p, viz.,

$$P(p) = \sum_{e_i \in \alpha(p)} P(e_i)$$

Unfortunately, there were two serious problems infecting this original probabilistic approach: One, the processing in question needed to take place over paralyzingly large amounts of information (enumeration over the entire distribution is required). And two, the expressivity of the approach was merely propositional. (It was by the way the philosopher Hilary Putnam (1963) who pointed out that there was a price to pay in moving to the first-order level. The issue is not discussed herein.) Everything changed with the advent of a new formalism that marks the marriage of probabilism and graph theory: Bayesian networks (also called belief nets). The pivotal text was (Pearl 1988).

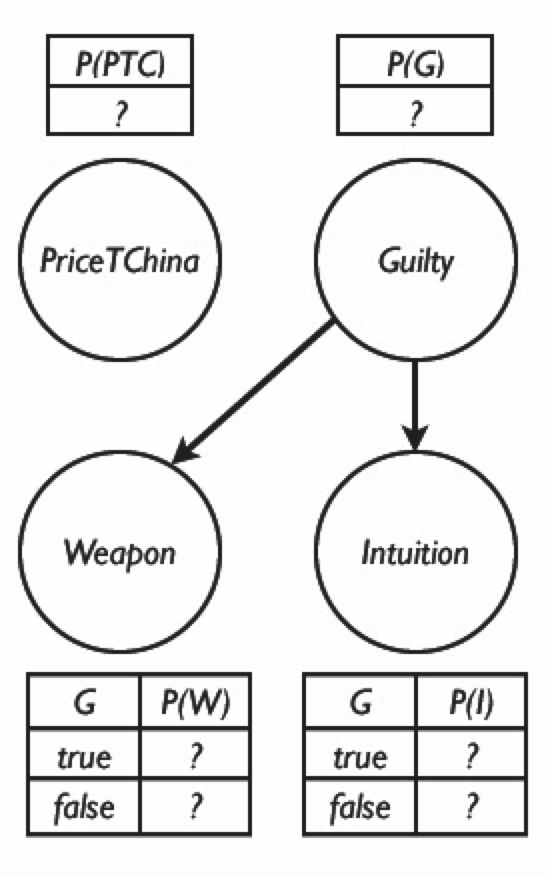

To explain Bayesian networks, and to provide a contrast between Bayesian probabilistic inference, and argument-based approaches that are likely to be attractive to classically trained philosophers, let us build upon the example of Black introduced above. Suppose that we want to compute the posterior probability of the guilt of our murder suspect, Mr. Black, from observed evidence. We have three Boolean variables in play: Guilty, Weapon, and Intuition. Weapon is true or false based on whether or not a murder weapon (the knife, recall) belonging to Black is found at the scene of the bloody crime. The variable Intuition is true provided that the very experienced detective in charge of the case, Watson, has an intuition, without examining any physical evidence in the case, that Black is guilty; ¬intuition holds just in case Watson has no intuition either way. Here is a table that holds all the (eight) atomic events in the scenario so far:

| | weapon, intuition | weapon, ¬intuition | ¬weapon, intuition | ¬weapon, ¬intuition |
|---|---|---|---|---|
| guilty | 0.208 | 0.016 | 0.072 | 0.008 |
| ¬guilty | 0.011 | 0.063 | 0.134 | 0.486 |
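The enumeration method described earlier (sum the probabilities of the atomic events in which a proposition holds) can be sketched against this table. The following Python is a minimal illustration, not a rendering of any algorithm from AIMA; the function names `prob` and `cond_prob` are introduced here for the purpose. Note that the eight entries as printed sum to 0.998 rather than exactly 1, so the computed values should be read as approximate.

```python
# Full joint distribution over (Guilty, Weapon, Intuition), copied from the table.
joint = {
    (True,  True,  True):  0.208,
    (True,  True,  False): 0.016,
    (True,  False, True):  0.072,
    (True,  False, False): 0.008,
    (False, True,  True):  0.011,
    (False, True,  False): 0.063,
    (False, False, True):  0.134,
    (False, False, False): 0.486,
}

def prob(holds):
    """P(p): sum the probabilities of the atomic events in which p holds."""
    return sum(p for event, p in joint.items() if holds(*event))

def cond_prob(holds, given):
    """P(p | q), computed as P(p and q) / P(q)."""
    both = prob(lambda g, w, i: holds(g, w, i) and given(g, w, i))
    return both / prob(given)

p_guilty = prob(lambda g, w, i: g)
p_guilty_given_weapon = cond_prob(lambda g, w, i: g, lambda g, w, i: w)
total = prob(lambda g, w, i: True)

print(round(p_guilty, 3))
print(round(p_guilty_given_weapon, 3))
print(round(total, 3))
```

The cost of this approach is visible in the code: every query walks the whole table, and the table doubles in size with each Boolean variable added.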

Were we to add the aforeintroduced discrete random variable PriceTChina, we would of course have 40 events, corresponding in tabular form to the preceding table associated with each of the five possible values of PriceTChina. That is, there are 40 events in

$$\mathbf{P}(\mathit{Guilty}, \mathit{Weapon}, \mathit{Intuition}, \mathit{PriceTChina})$$

Bayesian networks provide an economical way to represent the situation. Such networks are directed, acyclic graphs in which nodes correspond to random variables. When there is a directed link from node Nᵢ to node Nⱼ, we say that Nᵢ is a parent of Nⱼ. With each node Nᵢ there is a corresponding conditional probability distribution

$$\mathbf{P}(N_i \mid \mathit{Parents}(N_i))$$

where, of course, Parents(Nᵢ) denotes the parents of Nᵢ. The following figure shows such a network for the case we have been considering. The specific probability information is omitted; readers should at this point be able to readily calculate it using the machinery provided above.

Notice the economy of the network, in striking contrast to the prospect, visited above, of listing all 40 possibilities. The price of tea in China is presumed to have no connection to the murder, and hence the relevant node is isolated. In addition, only local probability information is included, corresponding to the relevant tables shown in the figure (each typically termed a conditional probability table). And yet from a Bayesian network, every entry in the full joint distribution can be easily calculated, as follows. First, for each node/variable Nᵢ we write Nᵢ = nᵢ to indicate an assignment to that node/variable. The conjunction of the specific assignments to every variable in the full joint probability distribution can then be written as

$$P(N_1 = n_1 \wedge \ldots \wedge N_n = n_n)$$

Each such entry is the product of the corresponding entries in the conditional probability tables:

$$P(N_1 = n_1 \wedge \ldots \wedge N_n = n_n) = \prod_{i=1}^{n} P(n_i \mid \mathit{parents}(N_i))$$

Earlier, we observed that the full joint distribution can be used to infer an answer to queries about the domain. Given this, it follows immediately that Bayesian networks have the same power. But in addition, there are much more efficient methods over such networks for answering queries. These methods, and increasing the expressivity of networks toward the first-order case, are outside the scope of the present entry. Readers are directed to AIMA, or any of the other textbooks affirmed in this entry (see note 8).
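To see how a network recovers the full joint distribution from local tables, consider the following sketch. Everything specific in it is an assumption made for illustration: the structure (Guilty as the sole parent of both Weapon and Intuition) and the numbers in the conditional probability tables are hypothetical, and are not taken from the figure or from the table of atomic events. Each joint entry is computed as the product of one conditional probability per node, given the values of that node's parents.

```python
from itertools import product

# Hypothetical structure: Guilty -> Weapon and Guilty -> Intuition.
parents = {"Guilty": (), "Weapon": ("Guilty",), "Intuition": ("Guilty",)}

# Hypothetical conditional probability tables, giving
# P(node = True | parent values). The numbers are illustrative only.
cpt = {
    "Guilty": {(): 0.3},
    "Weapon": {(True,): 0.7, (False,): 0.1},
    "Intuition": {(True,): 0.9, (False,): 0.2},
}

def joint_entry(assignment):
    """One full-joint entry: the product of P(n_i | parents(N_i)) over all nodes."""
    result = 1.0
    for node, value in assignment.items():
        parent_values = tuple(assignment[p] for p in parents[node])
        p_true = cpt[node][parent_values]
        result *= p_true if value else 1.0 - p_true
    return result

entries = {
    values: joint_entry(dict(zip(parents, values)))
    for values in product([True, False], repeat=len(parents))
}
print(len(entries))
print(sum(entries.values()))
```

In this toy case the network stores five numbers where the full joint table needs eight entries; the saving grows quickly as variables are added, provided each node has few parents.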

Before concluding this section, it is probably worth noting that, from the standpoint of philosophy, a situation such as the murder investigation we have exploited above would often be analyzed into arguments, and strength factors, not into numbers to be crunched by purely arithmetical procedures. For example, in the epistemology of Roderick Chisholm, as presented in his Theory of Knowledge (Chisholm 1966, 1977), Detective Watson might classify a proposition like “Black committed the murder” as counterbalanced if he was unable to find a compelling argument either way, or perhaps probable if the murder weapon turned out to belong to Black. Such categories cannot be found on a continuum from 0 to 1, and they are used in articulating arguments for or against Black's guilt. Argument-based approaches to uncertain and defeasible reasoning are virtually non-existent in AI. One exception is Pollock's approach, covered below. This approach is Chisholmian in nature.

There are a number of ways of “carving up” AI. By far the most prudent and productive way to summarize the field is to turn yet again to the AIMA text, by any metric a masterful, comprehensive overview of the field.[8]

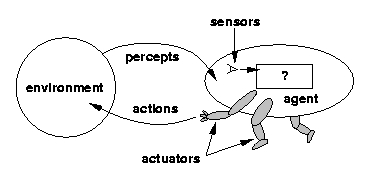

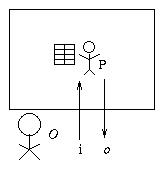

As Russell and Norvig (2002) tell us in the Preface of AIMA:

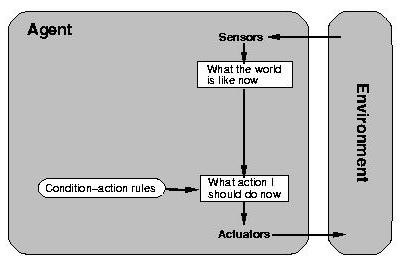

[Our] main unifying theme is the idea of an intelligent agent. We define AI as the study of agents that receive percepts from the environment and perform actions. Each such agent implements a function that maps percept sequences to actions, and we cover different ways to represent these functions... (Russell & Norvig 2002, vii)

The basic picture is thus summed up in this figure:

The content of AIMA derives, essentially, from fleshing out

this picture; that is,

As the book progresses, agents get increasingly sophisticated, and the

implementation of the function they represent thus draws from more and

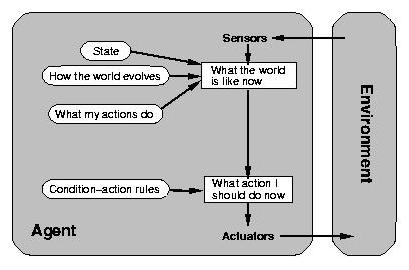

more of what AI can currently muster. The following figure gives an

overview of an agent that is a bit smarter than the simple reflex

agent. This smarter agent has the ability to internally model the

outside world, and is therefore not simply at the mercy of what can at

the moment be directly sensed.

There are eight parts to

AIMA. As the reader passes through these parts, she is

introduced to agents that take on the powers discussed in each part.

Part I is an introduction to the agent-based view. Part II is

concerned with giving an intelligent agent the capacity to think ahead

a few steps in clearly defined environtments. Examples here include

agents able to successfully play games of perfect information, such as

chess. Part III deals with agents that have declarative knowledge and

can reason in ways that will be quite familiar to most philosophers

and logicians (e.g., knowledge-based agents deduce what actions should

be taken to secure their goals). Part IV of the book outfits agents

with the power to handle uncertainty by reasoning in probabilistic

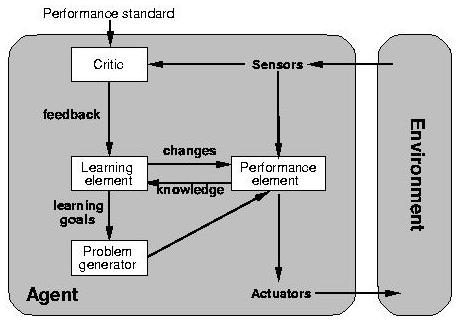

fashion. In Part VI, agents are given a capacity to learn. The

following figure shows the overall structure of a learning agent.

The final set of powers agents are given allow them to communicate.

These powers are covered in Part VII.

Philosophers who patiently travel the entire progression of increasingly smart agents will no doubt ask, when reaching the end of Part VII, if anything is missing. Are we given enough, in general, to build an artificial person, or is there enough only to build a mere animal? This question is implicit in the following from Charniak and McDermott (1985):

To their credit, Russell & Norvig, in AIMA's Chapter 27, “AI: Present and Future,” consider this question, at least to some degree. They do so by considering some challenges to AI that have hitherto not been met. One of these challenges is described by R&N as follows:

This specific challenge is actually merely the foothill before a dizzyingly high mountain that AI must eventually somehow manage to climb. That mountain, put simply, is reading. Despite the fact that, as noted, Part VI of AIMA is devoted to machine learning, AI, as it stands, offers next to nothing in the way of a mechanization of learning by reading. Yet when you think about it, reading is probably the dominant way you learn at this stage in your life. Consider what you're doing at this very moment. It’s a good bet that you are reading this sentence because, earlier, you set yourself the goal of learning about the field of AI. Yet the formal models of learning provided in AIMA's Part VI (which are all and only the models at play in AI) cannot be applied to learning by reading.[9] These models all start with a function-based view of learning. According to this view, to learn is almost invariably to produce an underlying function f on the basis of a restricted set of pairs (a₁, f(a₁)), (a₂, f(a₂)), ..., (aₙ, f(aₙ)). For example, consider receiving inputs consisting of 1, 2, 3, 4, and 5, and corresponding range values of 1, 4, 9, 16, and 25; the goal is to “learn” the underlying mapping from natural numbers to natural numbers. In this case, assume that the underlying function is n², and that you do “learn” it. While this narrow model of learning can be productively applied to a number of processes, the process of reading isn’t one of them. Learning by reading cannot (at least for the foreseeable future) be modeled as divining a function that produces argument-value pairs. Instead, your reading about AI can pay dividends only if your knowledge has increased in the right way, and if that knowledge leaves you poised to be able to produce behavior taken to confirm sufficient mastery of the subject area in question. This behavior can range from correctly answering and justifying test questions regarding AI, to producing a robust, compelling presentation or paper that signals your achievement.
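The function-based view of learning can be illustrated with a deliberately small sketch. It is not drawn from AIMA: the hypothesis space (the functions n ↦ nᵏ for small k) and the helper name `learn` are assumptions made here for illustration. The learner receives the five argument-value pairs from the example and returns the first candidate function consistent with all of them.

```python
# Training pairs (a_i, f(a_i)) from the example in the text.
examples = [(1, 1), (2, 4), (3, 9), (4, 16), (5, 25)]

# A tiny, hypothetical hypothesis space: n -> n**k for k = 0, ..., 4.
hypotheses = {f"n**{k}": (lambda n, k=k: n ** k) for k in range(5)}

def learn(examples, hypotheses):
    """Return the name of the first hypothesis consistent with every example."""
    for name, h in hypotheses.items():
        if all(h(a) == value for a, value in examples):
            return name
    return None

learned = learn(examples, hypotheses)
print(learned)

# The learned function generalizes to an argument outside the training pairs.
print(hypotheses[learned](6))
```

The sketch makes the limitation plain: the learner's entire output is a mapping from arguments to values, which is not an obvious fit for what a reader gains from a text.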

Two points deserve to be made about machine reading. First, it may not be clear to all readers that reading is an ability that is central to intelligence. The centrality derives from the fact that intelligence requires vast knowledge. We have no other means of getting systematic knowledge into a system than to get it in from text, whether text on the web, text in libraries, newspapers, and so on. You might even say that the big problem with AI has been that machines really don't know much compared to humans. That can only be because of the fact that humans read (or hear: illiterate people can listen to text being uttered and learn that way). Either machines gain knowledge by humans manually encoding and inserting knowledge, or by reading and listening. These are brute facts. (We leave aside supernatural techniques, of course. Oddly enough, Turing didn't: he seemed to think ESP should be discussed in connection with the powers of minds and machines. See (Turing 1950).)

Now for the second point. Humans able to read have invariably also learned a language, and learning languages has been modeled in conformity to the function-based approach adumbrated just above (Osherson et al. 1986). However, this doesn't entail that an artificial agent able to read, at least to a significant degree, must have really and truly learned a natural language. AI is first and foremost concerned with engineering computational artifacts that measure up to some test (where, yes, sometimes that test is from the human sphere), not with whether these artifacts process information in ways that match those present in the human case. It may or may not be necessary, when engineering a machine that can read, to imbue that machine with human-level linguistic competence. The issue is empirical, and as time unfolds, and the engineering is pursued, we shall no doubt see the issue settled.

It would seem that the greatest challenges facing AI are ones the field apparently hasn't even come to grips with yet. Some mental phenomena of paramount importance to many philosophers of mind and neuroscience are simply missing from AIMA. Two examples are subjective consciousness and creativity. The former is only mentioned in passing in AIMA, but subjective consciousness is the most important thing in our lives -- indeed we only desire to go on living because we wish to go on enjoying subjective states of certain types. Moreover, if human minds are the product of evolution, then presumably phenomenal consciousness has great survival value, and would be of tremendous help to a robot intended to have at least the behavioral repertoire of the first creatures with brains that match our own (hunter-gatherers; see Pinker 1997). Of course, subjective consciousness is largely missing from the sister fields of cognitive psychology and computational cognitive modeling as well.[10]

To some readers, it might seem at the very least tendentious to point to subjective consciousness as a major challenge to AI that it has yet to address. These readers might be of the view that pointing to this problem is to look at AI through a distinctively philosophical prism, and indeed a controversial philosophical standpoint. But as its literature makes clear, AI measures itself by looking to animals and humans and picking out in them remarkable mental powers, and by then seeing if these powers can be mechanized. Arguably the power most important to humans (the capacity to experience) is nowhere to be found on the target list of most AI researchers. There may be a good reason for this (no formalism is at hand, perhaps), but there is no denying that the state of affairs in question obtains, and that, in light of how AI measures itself, it’s worrisome.

As to creativity, it's quite remarkable that the power we most praise in human minds is nowhere to be found in AIMA. Just as in (Charniak & McDermott 1985) one cannot find ‘neural’ in the index, ‘creativity’ can't be found in the index of AIMA. This is particularly odd because many AI researchers have in fact worked on creativity (especially those coming out of philosophy; e.g., Boden 1994, Bringsjord & Ferrucci 2000).

Although the focus has been on AIMA, any of its counterparts could have been used. As an example, consider Artificial Intelligence: A New Synthesis, by Nils Nilsson. (A synopsis and TOC are available at Http://print.google.com/print?id=LIXBRwkibdEC&lpg=1&prev=.) As in the case of AIMA, everything here revolves around a gradual progression from the simplest of agents (in Nilsson's case, reactive agents), to ones having more and more of those powers that distinguish persons. Energetic readers can verify that there is a striking parallel between the main sections of Nilsson's book and AIMA. In addition, Nilsson, like Russell and Norvig, ignores phenomenal consciousness, reading, and creativity. None of the three are even mentioned.

A final point to wrap up this section. It seems quite plausible to hold that there is a certain inevitability to the structure of an AI textbook, and the apparent reason is perhaps rather interesting. In personal conversation, Jim Hendler, a well-known AI researcher who is one of the main innovators behind Semantic Web (Berners-Lee, Hendler, Lassila 2001), an under-development “AI-ready” version of the World Wide Web, has said that this inevitability can be rather easily displayed when teaching Introduction to AI; here's how. Begin by asking students what they think AI is. Invariably, many students will volunteer that AI is the field devoted to building artificial creatures that are intelligent. Next, ask for examples of intelligent creatures. Students always respond by giving examples across a continuum: simple multi-cellular organisms, insects, rodents, lower mammals, higher mammals (culminating in the great apes), and finally human persons. When students are asked to describe the differences between the creatures they have cited, they end up essentially describing the progression from simple agents to ones having our (e.g.) communicative powers. This progression gives the skeleton of every comprehensive AI textbook. Why does this happen? The answer seems clear: it happens because we can’t resist conceiving of AI in terms of the powers of extant creatures with which we are familiar. At least at present, persons, and the creatures who enjoy only bits and pieces of personhood, are -- to repeat -- the measure of AI.

SEP already contains a separate entry entitled Logic and Artificial Intelligence, written by Thomason. This entry is focused on non-monotonic reasoning, and reasoning about time and change; the entry also provides a history of the early days of logic-based AI, making clear the contributions of those who founded the tradition (e.g., John McCarthy and Pat Hayes; see their seminal 1969 paper).

Reasoning based on classical deductive logic is monotonic; that is, if Φ ⊢ φ, then for all ψ, Φ ∪ {ψ} ⊢ φ.

The formalisms and techniques discussed in Logic and Artificial Intelligence have now reached, as of 2006, a level of impressive maturity -- so much so that in various academic and corporate laboratories, implementations of these formalisms and techniques can be used to engineer robust, real-world software. It is strongly recommended that readers who have assimilated Thomason's entry and are interested in learning where AI stands in these areas consult (Mueller 2006), which provides, in one volume, integrated coverage of non-monotonic reasoning (in the form, specifically, of circumscription, introduced by Thomason), and reasoning about time and change in the situation and event calculi. (The former calculus is also introduced by Thomason. In the second, timepoints are included, among other things.) The other nice thing about (Mueller 2006) is that the logic used is multi-sorted first-order logic (MSL), which has unificatory power that will be known to and appreciated by many technical philosophers and logicians (Manzano 1996).

In the present entry, three topics of importance in AI not covered in Logic and Artificial Intelligence are mentioned. They are:

Detailed accounts of logicist AI that fall under the agent-based scheme can be found in (Nilsson 1991, Bringsjord & Ferrucci 1998).[11]

The core idea is that an intelligent agent receives percepts from the external world in the form of formulae in some logical system (e.g., first-order logic), and infers, on the basis of these percepts and its knowledge base, what actions should be performed to secure the agent's goals. (This is of course a barbaric simplification. Information from the external world is encoded in formulae, and transducers to accomplish this feat may be components of the agent.)
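The core idea can be sketched in miniature. The code below is a toy under stated assumptions, not an account of any system cited in this entry: it uses propositional Horn rules rather than first-order logic, the rule base and the atom names (`dirty_here`, `do_suck`, and so on) are hypothetical, and it performs only monotonic inference, with no revision of beliefs. Percepts arrive as atomic formulae, are added to the agent's beliefs, and forward chaining derives which actions to perform.

```python
def forward_chain(facts, rules):
    """Close a set of atomic facts under Horn rules of the form (premises, conclusion)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                changed = True
    return known

# Hypothetical knowledge base for a toy cleaning agent.
rules = [
    (frozenset({"dirty_here"}), "do_suck"),
    (frozenset({"clean_here", "dirty_elsewhere"}), "do_move"),
]

def agent_step(beliefs, percepts):
    """Add percepts to the beliefs, infer, and return (new beliefs, derived actions)."""
    beliefs = forward_chain(beliefs | percepts, rules)
    actions = sorted(b for b in beliefs if b.startswith("do_"))
    return beliefs, actions

beliefs, actions = agent_step(set(), {"dirty_here"})
print(actions)
```

A fuller treatment would have to retract beliefs as the world changes, which is where the non-monotonic formalisms mentioned above come in.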

To clarify things a bit, we consider, briefly, the logicist view in connection with arbitrary logical systems

When the logical system referred to is clear from context, or when we don't care about which logical system is involved, we can simply write

Each logical system, in its formal semantics, will include objects designed to represent ways the world pointed to by formulae in this system can be. Let these ways be denoted by Wi

We extend this to a set of formulas in the natural way:

Wi

To begin, we assume that the human designer, after studying the world, uses the language of a particular logical system to give to our agent an initial set of beliefs Δ₀ about what this world is like. In doing so, the designer works with a formal model of this world, W, and ensures that W

The cycle continues when the agent ACTS on the environment, in an attempt to secure its goals. Acting, of course, can cause changes to the environment. At this point, the agent SENSES the environment, and this new information Γ₁ factors into the process of adjustment, so that

It may strike you as preposterous that logicist AI be touted as an approach taken to replicate all of cognition. Reasoning over formulae in some logical system might be appropriate for computationally capturing high-level tasks like trying to solve a math problem (or devising an outline for an entry in the Stanford Encyclopedia of Philosophy), but how could such reasoning apply to tasks like those a hawk tackles when swooping down to capture scurrying prey? In the human sphere, the task successfully negotiated by athletes would seem to be in the same category. Surely, some will declare, an outfielder chasing down a fly ball doesn’t prove theorems to figure out how to pull off a diving catch to save the game!

Needless to say, such a declaration has been carefully considered by logicists. For example, Rosenschein and Kaelbling (1986) describe a method in which logic is used to specify finite state machines. These machines are used at “run time” for rapid, reactive processing. In this approach, though the finite state machines contain no logic in the traditional sense, they are produced by logic and inference. Recently, real robot control via first-order theorem proving has been demonstrated by Amir and Maynard-Reid (1999, 2000, 2001). In fact, you can download version 2.0 of the software that makes this approach real for a Nomad 200 mobile robot in an office environment. Of course, negotiating an office environment is a far cry from the rapid adjustments an outfielder for the Yankees routinely puts on display, but certainly it’s an open question as to whether future machines will be able to mimic such feats through rapid reasoning. The question is open if for no other reason than that all must concede that the constant increase in reasoning speed of first-order theorem provers is breathtaking. (For up-to-date news on this increase, visit and monitor the TPTP site.) There is no known reason why the software engineering in question cannot continue to produce speed gains that would eventually allow an artificial creature to catch a fly ball by processing information in purely logicist fashion.

Now we come to the second topic related to logicist AI that warrants mention herein: common logic and the intensifying quest for interoperability between logic-based systems using different logics. Only a few brief comments are offered. Readers wanting more can explore the links provided in the course of the summary.

To begin, please understand that AI has always been very much at the mercy of the vicissitudes of funding provided to researchers in the field by the United States Department of Defense (DoD). (The inaugural 1956 workshop was funded by DARPA, and many representatives from this organization attended AI@50.) It’s this fundamental fact that causally contributed to the temporary hibernation of AI carried out on the basis of artificial neural networks: When Minsky and Papert (1969) bemoaned the limitations of neural networks, it was the funding agencies that held back money for research based upon them. Since the late 1950's it's safe to say that the DoD has sponsored the development of many logics intended to advance AI and lead to helpful applications. Recently, it has occurred to many in the DoD that this sponsorship has led to a plethora of logics between which no translation can occur. In short, the situation is a mess, and now real money is being spent to try to fix it, through standardization and machine translation (between logical, not natural, languages).

The standardization is coming chiefly through what is known as Common Logic (CL), and variants thereof. (CL is soon to be an ISO standard. ISO is the International Organization for Standardization.) Philosophers interested in logic, and of course logicians, will find CL to be quite fascinating. (From an historical perspective, the advent of CL is interesting in no small part because the person spearheading it is none other than Pat Hayes, the same Hayes who, as we have seen, worked with McCarthy to establish logicist AI in the 1960’s. Though Hayes was not at the original 1956 Dartmouth conference, he certainly must be regarded as one of the founders of contemporary AI.) One of the interesting things about CL, at least as I see it, is that it signifies a trend toward the marriage of logics, and programming languages and environments. Another system that is a logic/programming hybrid is Athena, which can be used as a programming language, and is at the same time a form of MSL. Athena is known as a denotational proof language (Arkoudas 2000).

How is interoperability between two systems to be enabled by CL? Suppose one of these systems is based on logic L, and the other on L'. (To ease exposition, assume that both logics are first-order.) The idea is that a theory

Now for the third topic in this section: what can be called encoding down. The technique is easy to understand. Suppose that we have on hand a set

It’s tempting to define non-logicist AI by negation: an approach to building intelligent agents that rejects the distinguishing features of logicist AI. Such a shortcut would imply that the agents engineered by non-logicist AI researchers and developers, whatever the virtues of such agents might be, cannot be said to know that

From the standpoint of formalisms other than logical systems, non-logicist AI can be partitioned into symbolic but non-logicist approaches, and connectionist/neurocomputational approaches. (AI carried out on the basis of symbolic, declarative structures that, for readability and ease of use, are not treated directly by researchers as elements of formal logics, does not count. In this category fall traditional semantic networks, Schank's (1972) conceptual dependency scheme, and other schemes.) The former approaches, today, are probabilistic, and are based on the formalisms (Bayesian networks) covered above. The latter approaches are based, as we have noted, on formalisms that can be broadly termed “neurocomputational.” Given our space constraints, only one of the formalisms in this category is described here (and briefly at that): the aforementioned artificial neural networks.[14]

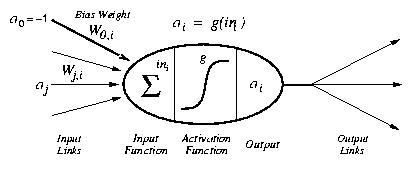

Neural nets are composed of units or nodes designed to represent neurons, which are connected by links designed to represent dendrites, each of which has a numeric weight.

As you might imagine, there are many different kinds of neural

networks. The main distinction is between feed-forward and

recurrent networks. In feed-forward networks like the one

pictured immediately above, as their name suggests, links move

information in one direction, and there are no cycles; recurrent

networks allow for cycling back, and can become rather complicated.

In general, though, it now seems safe to say that neural networks are

fundamentally plagued by the following fact: when they are simple,

efficient learning algorithms are possible, but when they are

multi-layered and thus sufficiently expressive to represent non-linear

functions, they are very hard to train.
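
The contrast drawn above between simple and multi-layered networks can be illustrated with a minimal sketch. It assumes threshold units and the classic perceptron learning rule; the names `step`, `unit`, `train_perceptron`, and `xor_net` are illustrative and do not come from AIMA or any library. A single unit learns AND quickly, no single unit can represent XOR, and a two-layer feed-forward net (with weights set by hand here, so the training difficulty is sidestepped rather than solved) does represent it.

```python
def step(x):
    """Threshold activation: the unit fires (1) iff its net input is positive."""
    return 1 if x > 0 else 0

def unit(weights, bias, inputs):
    """One unit: weighted sum over incoming links, then the activation."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train_perceptron(examples, epochs=20, rate=1.0):
    """Perceptron learning rule for a single two-input unit."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - unit(weights, bias, inputs)
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias

def accuracy(weights, bias, examples):
    """Fraction of examples the single unit classifies correctly."""
    return sum(unit(weights, bias, x) == t for x, t in examples) / len(examples)

def xor_net(inputs):
    """Two-layer feed-forward net with hand-set (not learned) weights for XOR."""
    h_or = unit([1.0, 1.0], -0.5, inputs)    # fires if at least one input is 1
    h_and = unit([1.0, 1.0], -1.5, inputs)   # fires only if both inputs are 1
    return unit([1.0, -1.0], -0.5, [h_or, h_and])  # "OR but not AND"

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND = [(x, x[0] & x[1]) for x in INPUTS]
XOR = [(x, x[0] ^ x[1]) for x in INPUTS]

and_w, and_b = train_perceptron(AND)
xor_w, xor_b = train_perceptron(XOR)
print("single unit on AND:", accuracy(and_w, and_b, AND))
print("single unit on XOR:", accuracy(xor_w, xor_b, XOR))
print("two-layer net on XOR:", [xor_net(x) for x in INPUTS])
```

The single unit reaches perfect accuracy on AND because AND is linearly separable; on XOR it cannot, whatever the number of epochs, which is why the hidden layer is needed.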

Perhaps the best technique for teaching students about neural networks

in the context of other statistical learning formalisms and methods is

to focus on a specific problem, preferably one that seems unnatural to

tackle using logicist techniques. The task is then to seek to

engineer a solution to the problem, using any and all techniques

available. One nice problem is handwriting recognition (which

also happens to have a rich philosophical dimension; see

e.g. Hofstadter & McGraw 1995). For example, consider the problem

of assigning, given as input a handwritten digit d, the correct

digit, 0 through 9. Because there is a database of 60,000 labeled

digits available to researchers (from the National Institute of

Standards and Technology), this problem has evolved into a benchmark

problem for comparing learning algorithms. It turns out that

kernel machines currently reign as the best approach to the

problem -- despite the fact that, unlike neural networks, they require

hardly any prior iteration. A nice summary of fairly recent results

in this competition can be found in Chapter 20 of AIMA.
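
To give a feel for what a kernel machine does on this kind of task, here is a toy sketch only. It assumes a kernel perceptron (one of the simplest kernel methods, not the support vector machines behind the benchmark results), a radial-basis kernel, and three hand-drawn 3x5 bitmaps standing in for the 60,000-digit database. Every name and value below is hypothetical.

```python
import math

def rbf(a, b, gamma=0.3):
    """Radial-basis kernel on equal-length pixel vectors."""
    return math.exp(-gamma * sum((p - q) ** 2 for p, q in zip(a, b)))

def bitmap(rows):
    """Flatten a 3x5 picture, given as strings of '0'/'1', into a pixel tuple."""
    return tuple(int(ch) for row in rows for ch in row)

def train_kernel_perceptron(xs, ys, kernel, max_epochs=100):
    """Binary kernel perceptron; returns one mistake count (alpha) per example."""
    alphas = [0] * len(xs)
    for _ in range(max_epochs):
        mistakes = 0
        for i, (x, y) in enumerate(zip(xs, ys)):
            score = sum(a * t * kernel(z, x) for a, t, z in zip(alphas, ys, xs))
            if y * score <= 0:      # wrong side of (or on) the boundary
                alphas[i] += 1
                mistakes += 1
        if mistakes == 0:           # a full pass with no errors: done
            break
    return alphas

def train_one_vs_rest(xs, labels, kernel):
    """One binary classifier per digit: that digit against all the others."""
    models = {}
    for c in sorted(set(labels)):
        ys = [1 if label == c else -1 for label in labels]
        models[c] = (ys, train_kernel_perceptron(xs, ys, kernel))
    return models

def classify(x, xs, models, kernel):
    """Return the digit whose classifier gives x the highest score."""
    def score(c):
        ys, alphas = models[c]
        return sum(a * t * kernel(z, x) for a, t, z in zip(alphas, ys, xs))
    return max(models, key=score)

# Hypothetical training set: one clean bitmap each for the digits 0, 1 and 7.
TRAIN_X = [
    bitmap(["111", "101", "101", "101", "111"]),  # 0
    bitmap(["010", "110", "010", "010", "111"]),  # 1
    bitmap(["111", "001", "010", "010", "010"]),  # 7
]
TRAIN_Y = [0, 1, 7]
MODELS = train_one_vs_rest(TRAIN_X, TRAIN_Y, rbf)

# A "handwritten" zero with one stray pixel in the middle.
NOISY_ZERO = bitmap(["111", "101", "111", "101", "111"])
print(classify(NOISY_ZERO, TRAIN_X, MODELS, rbf))
```

The classifier never builds an explicit feature representation of a digit; it only compares a new bitmap with stored training bitmaps through the kernel, which is the defining trait of this family of methods.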

Readers interested in AI (and computational cognitive science) pursued

from an overtly brain-based orientation are encouraged to explore the

work of Rick Granger (2004a, 2004b) and researchers in his Brain Engineering

Laboratory and W.H. Neukom Institute for

Computational Sciences. The contrast between the “dry”,

logicist AI started at the original 1956 conference, and the approach

taken here by Granger and associates (in which brain circuitry is

directly modeled) is remarkable.

What, though, about deep, theoretical integration of the main

paradigms in AI? Such integration is at present only a possibility

for the future, but readers are directed to the research of some

striving for such integration. For example: Sun (1994, 2002) has been

working to demonstrate that human cognition that is on its face

symbolic in nature (e.g., professional philosophizing in the analytic

tradition, which deals explicitly with arguments and definitions

carefully symbolized) can arise from cognition that is

neurocomputational in nature. Koller (1997) has investigated the

marriage between probability theory and logic. And, in general, the

very recent arrival of so-called human-level AI is being led by

theorists seeking to genuinely integrate the three paradigms set out

above (e.g., Cassimatis 2006).

Notice that the heading for this section isn't Philosophy of

AI. We’ll get to that category momentarily. Philosophical AI is AI,

not philosophy; but it’s AI rooted in and flowing from, philosophy.

Before we ostensively characterize Philosophical AI courtesy of a

particular research program, let us consider the view that AI is in

fact simply philosophy, or a part thereof.

Daniel Dennett (1979) has famously claimed not just that there are

parts of AI intimately bound up with philosophy, but that

AI is philosophy (and psychology, at least of the cognitive

sort). (He has made a parallel claim about Artificial Life (Dennett

1998).) This view will turn out to be incorrect, but the reasons why

it’s wrong will prove illuminating, and our discussion will pave the

way for a discussion of Philosophical AI.

What does Dennett say, exactly? This:

Elsewhere he says his view is that AI should be viewed “as a most

abstract inquiry into the possibility of intelligence or

knowledge” (Dennett 1979, 64).

In short, Dennett holds that AI is the attempt to explain

intelligence, not by studying the brain in the hopes of identifying

components to which cognition can be reduced, and not by engineering

small information-processing units from which one can build in

bottom-up fashion to high-level cognitive processes, but rather by --

and this is why he says the approach is top-down -- designing

and implementing abstract algorithms that capture cognition. Leaving

aside the fact that, at least starting in the early 1980s, AI

includes an approach that is in some sense bottom-up (see the

neurocomputational paradigm discussed above, in Non-Logicist AI: A Summary; and see, specifically,

Granger's (2004a, 2004b) work, cited above, as

a specific counterexample), a fatal flaw infects Dennett's view.

Dennett sees the potential flaw, as reflected in:

Dennett has a ready answer to this objection. He writes:

Unfortunately, this is acutely problematic; and examination of the

problems throws light on the nature of AI.

First, insofar as philosophy and psychology are concerned with the

nature of mind, they aren't in the least trammeled by the

presupposition that mentation consists in computation. AI, at least

of the “Strong” variety (we'll discuss “Strong”

versus “Weak” AI below) is indeed an

attempt to substantiate, through engineering certain impressive

artifacts, the thesis that intelligence is at bottom computational (at

the level of Turing machines and their equivalents, e.g., register

machines). So there's a philosophical claim, for sure. But this

doesn't make AI philosophy, any more than some of the deeper, more

aggressive claims of some physicists (e.g., that the universe is

ultimately digital in nature; see http://www.digitalphilosophy.org)

make their field philosophy. Philosophy of physics certainly

entertains the proposition that the physical universe can be

perfectly modeled in digital terms (in a series of cellular automata,

e.g.), but of course philosophy of physics can't be

identified with this doctrine.

Second, we now know well (and those familiar with the relevant formal

terrain knew at the time of Dennett's writing) that information

processing can exceed standard computation, that is, can exceed

computation at and below the level of what a Turing machine can muster

(Turing-computation, we shall say). (Such information

processing is known as

hypercomputation, a term coined by philosopher Jack Copeland,

who has himself defined such machines (e.g., Copeland 1998). The

first machines capable of hypercomputation were trial-and-error

machines, introduced in the same famous issue of the Journal of

Symbolic Logic (Gold 1965, Putnam 1965). A new hypercomputer is

the infinite time Turing machine (Hamkins & Lewis 2000).)
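
The idea behind a trial-and-error machine can be sketched as follows. This is an illustration in the spirit of Gold's and Putnam's proposal, not their formal definitions. The "programs" are hypothetical Python generators in which each `yield` counts as one step. Each individual guess is ordinary Turing-computation; what exceeds Turing-computation is taking the limit of the infinite sequence of guesses as the answer, since no finite stage announces that the current guess is final.

```python
def countdown(n):
    """Hypothetical 'program' that runs for n steps and then halts."""
    while n > 0:
        n -= 1
        yield

def loop_forever():
    """Hypothetical 'program' that never halts."""
    while True:
        yield

def halts_within(make_program, steps):
    """Stage-`steps` guess: True iff the program halts within `steps` steps."""
    run = make_program()
    for _ in range(steps):
        try:
            next(run)
        except StopIteration:
            return True
    return False

# The guesses about countdown(3) start at "no" and flip to "yes" exactly once.
print([halts_within(lambda: countdown(3), t) for t in range(6)])
# The guesses about loop_forever stay at "no" at every stage we care to inspect.
print([halts_within(loop_forever, t) for t in range(6)])
```

For a halting program the guess eventually becomes and stays correct; for a non-halting one the guess is correct from the start. An observer at any finite stage cannot tell which case obtains, which is exactly why the limit is not itself Turing-computable.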

Dennett's appeal to Church's thesis thus flies in the face of the

mathematical facts: some varieties of information processing exceed

standard computation (or Turing-computation). Church's thesis, or

more precisely, the Church-Turing thesis (CTT), is the view that a function

f is effectively computable if and only if f is

Turing-computable (i.e., some Turing machine can compute f).

Thus, this thesis has nothing to say about information processing that

is more demanding than what a Turing machine can achieve. (Put

another way, there is no counter-example to CTT to be automatically

found in an information-processing device capable of feats beyond the

reach of Turing machines.) For all philosophy and psychology know, intelligence,

even if tied to information processing, exceeds what is

Turing-computational or Turing-mechanical.[15] This is especially

true because philosophy and psychology, unlike AI, are in no way

fundamentally charged with engineering artifacts, which makes the

physical realizability of hypercomputation irrelevant from their

perspectives. Therefore,

contra Dennett, to consider AI as psychology or philosophy is

to commit a serious error, precisely because so doing would box these

fields into only a speck of the entire space of functions from the

natural numbers (including tuples therefrom) to the natural numbers.

(Only a tiny portion of the functions in this space are

Turing-computable.) AI is without question much, much narrower than

this pair of fields. Of course, it's possible that AI could be

replaced by a field devoted to something other than building computational artifacts by

writing computer programs and running them on embodied Turing

machines. But this new field, by definition, would not be AI. Our

exploration of AIMA and other textbooks provides direct

empirical confirmation of this.
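
The parenthetical remark that only a tiny portion of the functions on the natural numbers are Turing-computable rests on a standard counting argument, sketched here. The symbols $\mathrm{TM}$ (the set of Turing machines) and $\mathcal{F}$ (the set of all functions from the natural numbers to the natural numbers) are introduced only for this illustration.

```latex
\begin{align*}
  % Every Turing machine has a finite description over a finite alphabet,
  % so there are only countably many machines:
  |\mathrm{TM}| &= \aleph_0 \\
  % Each Turing-computable function is computed by at least one machine:
  |\{ f \in \mathcal{F} : f \text{ is Turing-computable} \}| &\le \aleph_0 \\
  % The full function space is uncountable (Cantor):
  |\mathcal{F}| = \aleph_0^{\aleph_0} = 2^{\aleph_0} &> \aleph_0
\end{align*}
```

So the Turing-computable functions form a countable subset of an uncountable space, and the functions that are not Turing-computable have the full cardinality $2^{\aleph_0}$.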

Third, most AI researchers and developers, in point of fact, are

simply concerned with building useful, profitable artifacts, and

don’t spend much time reflecting upon the kinds of abstract

definitions of intelligence explored in this entry (e.g., What Exactly is AI?).

Though AI isn’t philosophy, there are certainly ways of doing

real implementation-focussed AI of the highest caliber that are

intimately bound up with philosophy. The best way to demonstrate this

is to simply present such research and development, or at least a

representative example thereof. The most prominent example in AI

today is John Pollock's OSCAR project.

It’s important to note at this juncture that the OSCAR project,

and the information processing that underlies it, are without question

at once philosophy and technical AI. Given that the work in

question has appeared in the pages of Artificial Intelligence,

a first-rank journal devoted to that field, and not to philosophy,

this is undeniable (see, e.g., Pollock 2001, 1992). This point is

important because while it’s certainly appropriate, in the

present venue, to emphasize connections between AI and philosophy,

some readers may suspect that this emphasis is contrived: they may

suspect that the truth of the matter is that page after page of AI

journals are filled with narrow, technical content far from

philosophy. Many such papers do exist. But we must distinguish

between writings designed to present the nature of AI, and its core

methods and goals, versus writings designed to present progress on

specific technical issues.

Writings in the latter category are more often than not quite narrow,

but, as the example of Pollock shows, sometimes these specific issues

are inextricably linked to philosophy. And of course Pollock's work

is a representative example. One could just as easily have selected

work by folks who don't happen to also produce straight philosophy.

For example, for an entire book written within the confines of AI and

computer science, but which is epistemic logic in action in many ways,

suitable for use in seminars on that topic, see (Fagin et al. 2004).

(It is hard to find technical work that isn’t bound up with

philosophy in some direct way. E.g., AI research on learning is all

intimately bound up with philosophical treatments of induction, of how

genuinely new concepts not simply defined in terms of prior ones can

be learned. One possible partial answer offered by AI is inductive

logic programming, discussed in Chapter 19 of AIMA.)

What of writings in the former category? Writings in this category,

while by definition in AI venues, not philosophy ones, are nonetheless

philosophical. Most textbooks include plenty of material that falls

into this category, and hence they include discussion of the

philosophical nature of AI (e.g., that AI is aimed at building

artificial intelligences, and that’s why, after all, it’s

called ‘AI’).

Now to OSCAR.

OSCAR, according to Pollock, will eventually be not just an

intelligent computer program, but an artificial person. (Lest it be

thought that this is spinning Pollock's work in the direction of the

stunningly ambitious, note that the subtitle of (Pollock 1995) is

“A Blueprint for How to Build a Person,” and that his prior

book (1989) was How to Build a Person.) However, though

persons have an array of capacities (perceptual powers, effectors that allow them

to manipulate their environments, linguistic abilities, etc.), OSCAR,

at least in the near term, will not have this breadth. OSCAR’s

strong suit is the “intellectual” side of personhood.

Pollock thus intends OSCAR to be an “artificial intellect”,

or, to use his neologism, an artilect. An artilect is a

rational agent; Pollock’s concern is thus with rationality. As to the

roles of AI and philosophy in addressing this concern, Pollock writes:

The distinguishing feature of OSCAR

qua artilect, at least so far, is that the system is able to

perform sophisticated defeasible reasoning.[16] The study of

defeasible reasoning was started by Roderick Chisholm (1957, 1966,

1977) and Pollock (1965, 1967, 1974), long before AI took the project